AI Content Integrity: Solutions for Disinformation and Deepfake Detection in November 2025

Content integrity is a top AI priority in November 2025, as generative models spark waves of synthetic media, deepfakes, and misinformation.

🔍 Why AI Content Integrity Is Trending Now

- Rapid advances in text-to-image and text-to-video models have made synthetic content realistic enough to threaten brand trust, election integrity, and regulatory compliance.

- EU AI Act compliance deadlines and stricter policy enforcement are pushing providers toward transparent provenance and robust watermarking of AI-generated content.

- Platforms are rolling out real-time detection tools, blockchain verification, and AI-powered moderation for user-generated content.

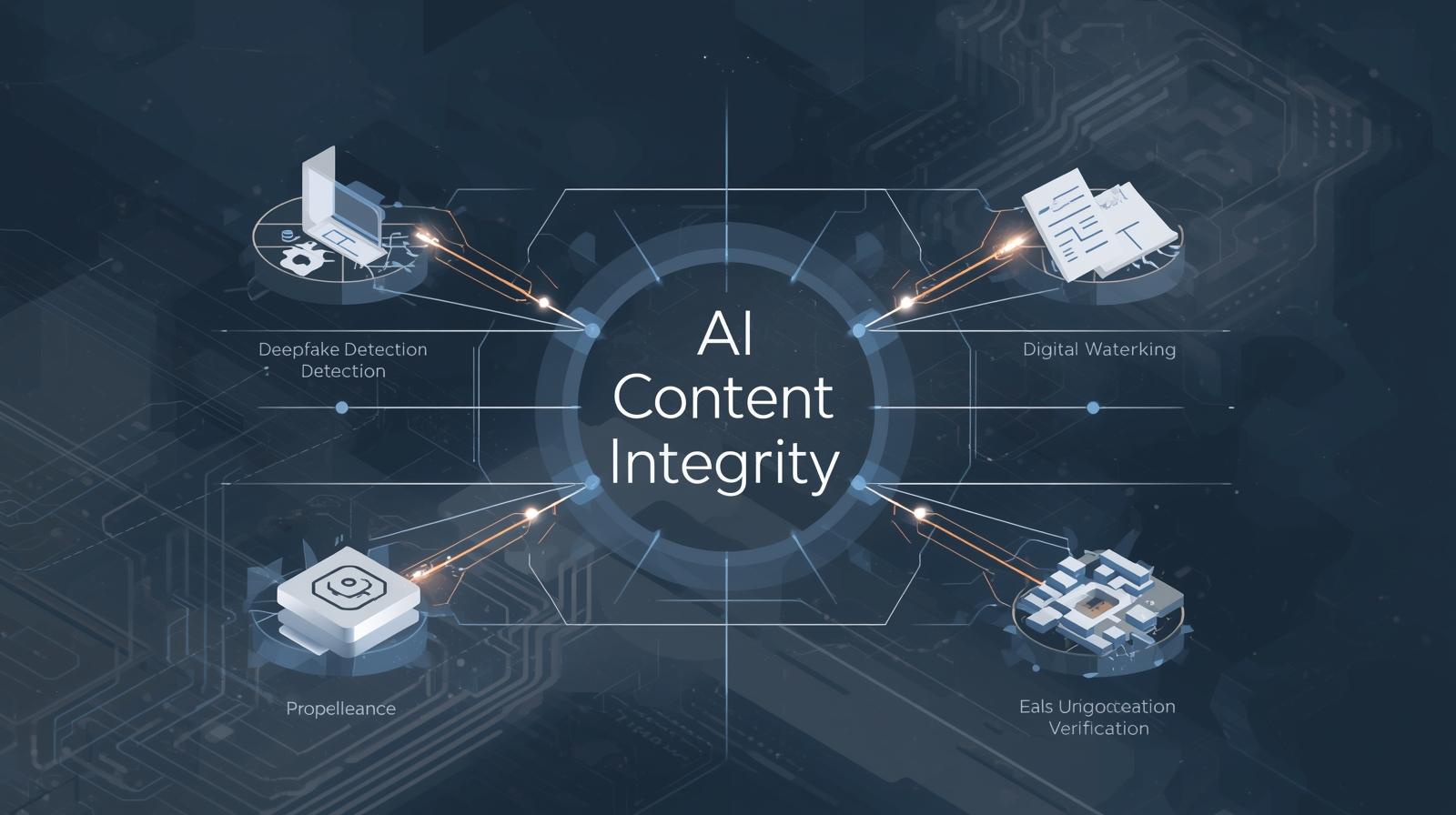

⚙️ Key Technologies and Solutions

- Deepfake detection: Tools analyzing voice, face, and metadata to flag synthetic media.

- Watermarking & provenance: Visible and invisible signals track source, edits, and ownership.

- Automated moderation: AI models filter fake news, flagged assets, and policy violations.
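
To make the provenance idea above concrete, here is a minimal sketch in Python. It assumes a simple signed "manifest" that records a file's source, its edit history, and a hash of its bytes. The names `make_manifest`, `verify_manifest`, and `SECRET_KEY` are hypothetical, and the shared-secret HMAC is only a stand-in for the public-key signatures that real provenance systems would likely use. It is not an implementation of any particular standard.

```python
import hashlib
import hmac
import json

# Hypothetical shared secret for this demo only; a production system
# would more likely use public-key signatures and managed keys.
SECRET_KEY = b"demo-signing-key"


def make_manifest(content: bytes, source: str, edits: list[str]) -> dict:
    """Build a signed provenance record for a piece of media."""
    record = {
        "source": source,
        "edits": edits,
        "sha256": hashlib.sha256(content).hexdigest(),
    }
    # Sign a canonical (sorted-key) JSON encoding of the record.
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    record["signature"] = hmac.new(SECRET_KEY, payload, hashlib.sha256).hexdigest()
    return record


def verify_manifest(content: bytes, manifest: dict) -> bool:
    """Return True only if the manifest is authentic and matches the content."""
    record = {k: v for k, v in manifest.items() if k != "signature"}
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    expected = hmac.new(SECRET_KEY, payload, hashlib.sha256).hexdigest()
    # Constant-time comparison avoids leaking signature bytes via timing.
    if not hmac.compare_digest(expected, manifest.get("signature", "")):
        return False
    return record.get("sha256") == hashlib.sha256(content).hexdigest()


original = b"raw image bytes"
manifest = make_manifest(original, source="newsroom-camera-01", edits=["crop"])
print(verify_manifest(original, manifest))           # True
print(verify_manifest(b"tampered bytes", manifest))  # False
```

The check fails both when the media bytes change and when someone rewrites the manifest fields without the key, which is the basic property a provenance record needs before it can be trusted to track source, edits, and ownership.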


